Report #49

Project: Lenci Test 7/3/2026

2026-03-07 12:10:24

Description

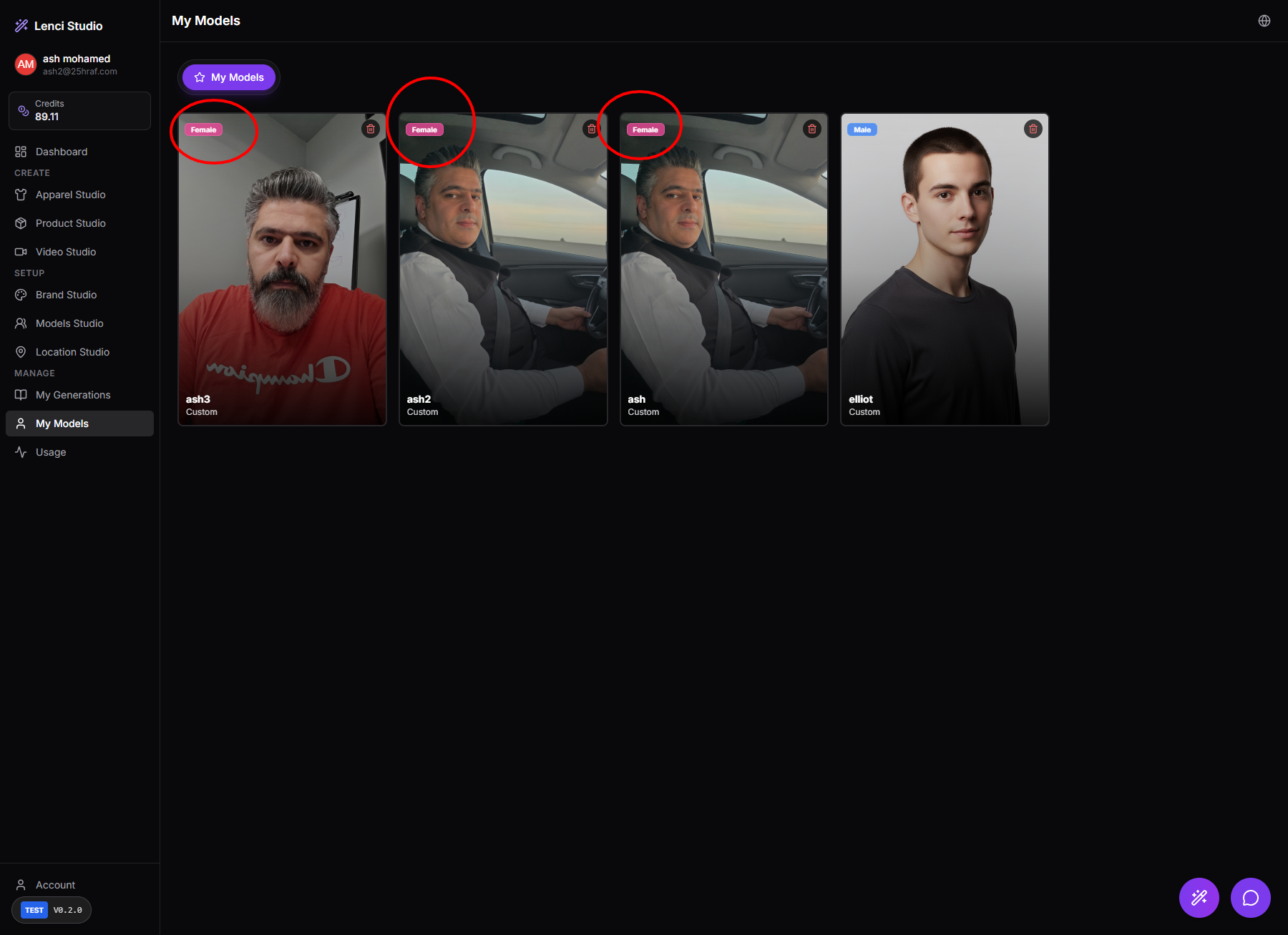

Issue description

In My Models, models created through Models Studio → Real Photo Model are automatically labeled as Female, even when the uploaded photos clearly show a male subject.

In the case shown:

- The generated model cards display the “Female” tag.
- The photos clearly depict a male person.
- The misclassification appears consistently across multiple models created by the same user.

This suggests that the system’s gender classification logic is either incorrect or defaulting to a fixed value, which produces inaccurate metadata tags.

Incorrect labeling can affect:

- Filtering and categorization in My Models.
- Prompt conditioning for generation pipelines.
- Downstream styling or clothing recommendations that depend on gender metadata.

Expected behavior

- The gender label assigned to a model should accurately reflect the uploaded photos.
- If the system performs automatic gender detection, it should classify the model correctly based on facial/body features.
- If the system cannot determine gender reliably, it should either:
  - Leave the field unset, or
  - Allow the user to manually choose the gender during model creation.

Acceptance criteria

- Models generated from Real Photo Model do not default to Female.
- Gender metadata reflects the actual subject in the uploaded images.
- Automatic gender detection (if used) correctly identifies obvious male/female cases.
- If detection confidence is low, the system prompts the user to select Male / Female / Neutral.
- The gender label shown on the model card matches the stored metadata.
- Filtering by Male or Female in model lists returns only correctly classified models.
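
The fallback behavior requested above (no fixed default, unset when detection is unreliable, user choice allowed) could be sketched roughly as follows. This is only an illustration, not the product's actual code: the names `Detection`, `resolve_gender`, `needs_user_prompt`, and the `CONFIDENCE_THRESHOLD` value are all hypothetical, and giving an explicit user choice precedence over the detector is an assumption.

```python
from dataclasses import dataclass
from typing import Optional

# Hypothetical cutoff; the real threshold (if any) is not known from this report.
CONFIDENCE_THRESHOLD = 0.85
ALLOWED_LABELS = {"male", "female", "neutral"}


@dataclass
class Detection:
    """Hypothetical output of an automatic gender detector."""
    label: Optional[str]
    confidence: float


def resolve_gender(detection: Optional[Detection],
                   user_choice: Optional[str] = None) -> Optional[str]:
    """Return the label to store, or None to leave the field unset.

    Assumption: an explicit user choice overrides automatic detection.
    There is deliberately no fixed default such as "female".
    """
    if user_choice is not None:
        choice = user_choice.strip().lower()
        if choice not in ALLOWED_LABELS:
            raise ValueError(f"unsupported gender label: {user_choice!r}")
        return choice

    if detection is None or detection.label is None:
        return None

    label = detection.label.strip().lower()
    if label in ALLOWED_LABELS and detection.confidence >= CONFIDENCE_THRESHOLD:
        return label

    # Low confidence or unknown label: leave unset rather than guess.
    return None


def needs_user_prompt(detection: Optional[Detection],
                      user_choice: Optional[str] = None) -> bool:
    """True when the UI should ask the user to pick Male / Female / Neutral."""
    return resolve_gender(detection, user_choice) is None
```

With this shape, a low-confidence result such as `Detection("female", 0.40)` leaves the field unset and triggers the prompt, so the model card and the stored metadata can both be driven from the single value that `resolve_gender` returns.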